Foundation Models: Why Industry 4.0 Needs Its Own

Every AI model in your factory is already obsolete. Here’s why, and what the next wave of industrial AI looks like.

Right now, 26% of the manufacturing workforce is eligible for retirement, and 1.5 to 2 million roles are expected to go vacant by the early 2030s (MIE Solutions / BLS, January 2026). In pulp and paper specifically, the number of workers aged 55 and older grew 17% in just two years, from 2021 to 2023 (Bureau of Labor Statistics). The replacement pipeline isn’t coming. The gap is structural, permanent, and widening every year.

That’s not a workforce problem. That’s a knowledge extinction event. And the industrial AI tools we’ve built so far are completely unequipped to solve it.

The Problem With Industrial AI Today

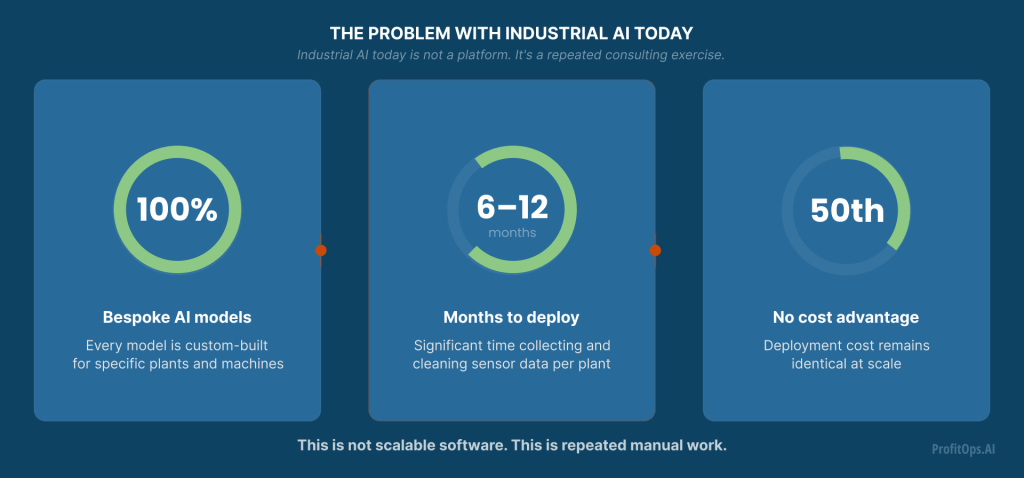

Here’s the uncomfortable truth about how manufacturers use AI: every single model is bespoke.

You hire a data science team, spend 6–12 months collecting and cleaning sensor data, and train a model specific to Plant A, Machine 3, Line 2. It works reasonably well until the process changes, new equipment arrives, or production shifts. Then you retrain. Then you repeat the whole exercise for Plant B. And Plant C.

This is bespoke AI — custom-built for one plant, useless anywhere else.

The economics are brutal: the cost of the 50th deployment is nearly identical to the cost of the first. You’re not building a platform; you’re running a consulting operation dressed up as a software company.

And critically, all of this work (months of effort, millions of dollars) produces a model that can only do one thing: predict. It tells you something will go wrong. It doesn’t tell you why. It doesn’t tell you what to change. It doesn’t intervene.

What Foundation Models Changed and Why Manufacturing Hasn't Felt It Yet

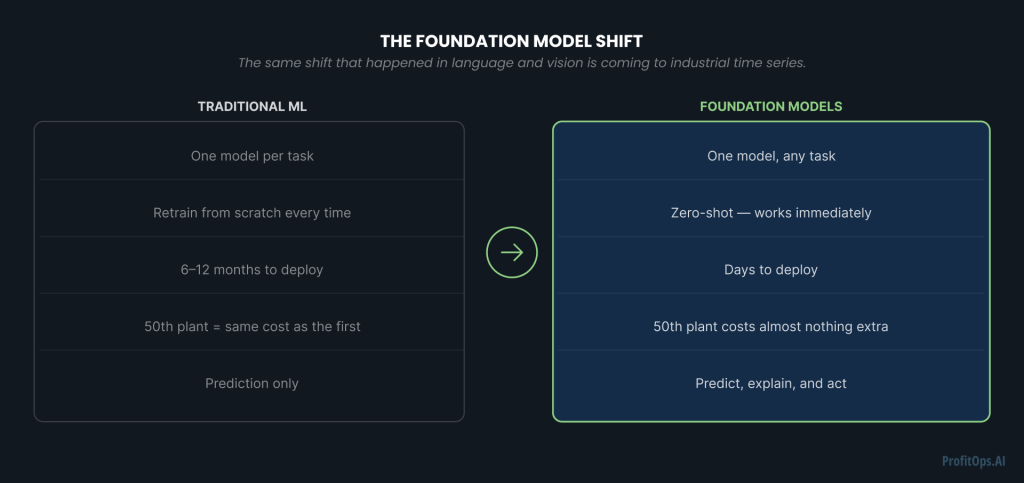

In 2023, something fundamental shifted in AI. GPT-4 didn’t just get better at language; it broke the one-model-per-task paradigm entirely. A single model, trained once on massive, diverse data, could perform zero-shot on tasks it had never been explicitly trained for. No retraining. No fine-tuning. Point it at a new problem and it works.

The same pattern happened in vision. DALL·E, Midjourney, Stable Diffusion: train once, generate anything.

This is the foundation model revolution: train once, deploy everywhere, and watch the marginal cost of each new deployment approach zero at scale.

For manufacturing, this matters more than almost any other industry. Imagine a model pre-trained on sensor data from hundreds of industrial processes — paper mills, chemical plants, steel furnaces, mineral processing facilities. When a new plant deploys it, they don’t retrain from scratch. They point it at their sensor streams and it works. Day one. The 50th plant costs almost nothing to onboard. Similar economics exist in other industrial support areas adjacent to manufacturing, from warehouse automation to material handling. Foundation models offer the same promise for these applications.

That’s not an incremental improvement. That’s a different business model for industrial AI entirely.
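A simple illustrative cost model makes the contrast concrete. The symbols are assumptions introduced here, not figures from any deployment: $c_b$ is the cost of one bespoke deployment, $C_0$ the one-time pre-training cost of a foundation model, and $c_f$ the onboarding cost per plant, with $c_f \ll c_b$. The average cost per plant across $N$ plants is then:

$$
\bar{c}_{\text{bespoke}}(N) = \frac{N\,c_b}{N} = c_b,
\qquad
\bar{c}_{\text{foundation}}(N) = \frac{C_0 + N\,c_f}{N} = \frac{C_0}{N} + c_f \;\longrightarrow\; c_f \quad (N \to \infty)
$$

The bespoke average never drops below $c_b$, which is why the 50th deployment costs about as much as the first. The foundation-model average falls toward $c_f$ as the one-time $C_0$ is spread over more plants, and the foundation approach is cheaper in total once $C_0 + N c_f < N c_b$, that is, once $N > C_0 / (c_b - c_f)$.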

But here’s the thing: the foundation model revolution has not yet reached manufacturing in its most important dimension. Yes, TimesFM, Chronos, Moirai, TimeGPT — these are impressive zero-shot forecasting models for time series. They solve the “train once, predict anywhere” problem. But they stop at prediction.

They tell you what will happen. None of them tell you why or what to do about it.

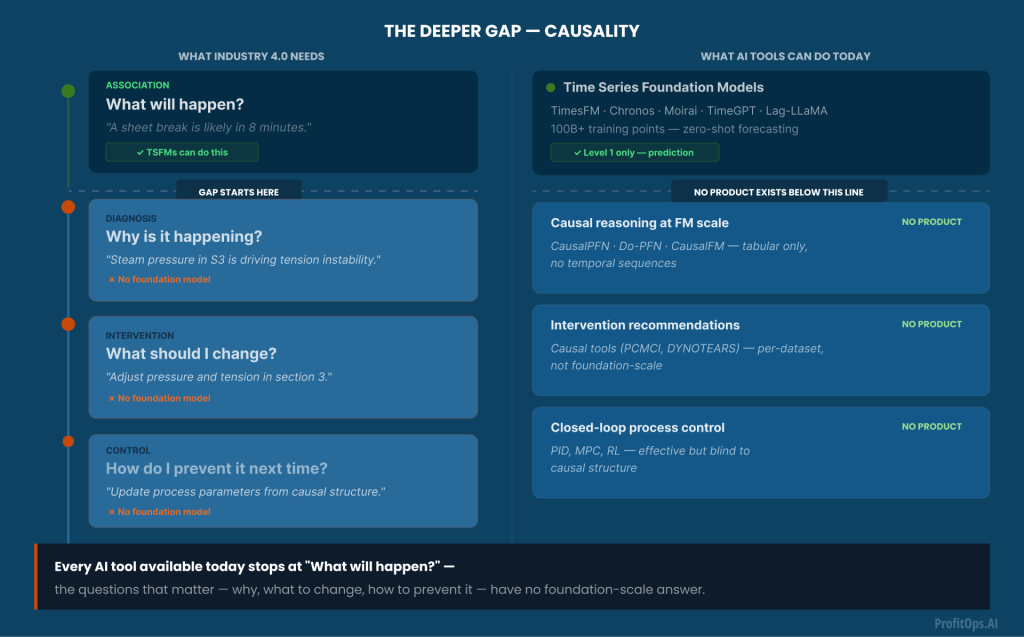

The Deeper Gap: Causality

In other AI domains, causality doesn’t matter as much. If DALL·E generates a slightly wrong image, you generate another. If GPT-4 gives a slightly off answer, you rephrase the question.

In a paper mill running at 800–1,000 metres per minute, a sheet break costs $10,000–$50,000 in lost production, waste, and restart time. You don’t iterate. You need to be right the first time, and you need to know why something is happening, not just that it is.

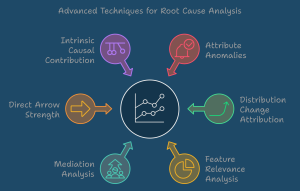

This requires causal reasoning. Not conversational causal reasoning: GPT-4 can tell you about causality in plain English, but it has no formal causal model. Structural causal reasoning: directed graphs, do-calculus, counterfactual inference. The mathematics of “if I change this variable, what happens to that outcome?”

The academic community has started arriving here. CausalPFN (Balazadeh et al., 2025), Do-PFN (Robertson et al., 2025), and CausalFM (Ma et al., ICLR 2026) are among the first foundation models for causal inference. Remarkable work. But they operate on static tabular data: cross-sectional snapshots with no concept of time, no temporal sequences, and no industrial sensor streams evolving second by second.
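A toy example helps make “if I change this variable, what happens to that outcome?” concrete. The minimal Python sketch below (standard library only) simulates a hypothetical structural causal model in which an unobserved “load” drives both steam pressure and wire-tension instability. It compares the slope a purely predictive fit learns from observational data with the effect of actually intervening on steam pressure, the do-operator. All variable names and coefficients are illustrative assumptions, not values from any real mill.

```python
import random
from statistics import fmean

# Hypothetical toy structural causal model (SCM). Variable names and
# coefficients are illustrative assumptions, not calibrated to a real mill.
#   load     Z ~ N(0, 1)                          hidden common cause
#   steam    X := 0.8*Z + N(0, 0.5^2)
#   tension  Y := 0.5*X + 1.0*Z + N(0, 0.5^2)     tension instability
# By construction, the true causal effect of X on Y is 0.5.

def sample(n, rng, do_steam=None):
    """Draw n (X, Y) rows. If do_steam is given, X is set by intervention: do(X = do_steam)."""
    rows = []
    for _ in range(n):
        z = rng.gauss(0.0, 1.0)
        if do_steam is None:
            x = 0.8 * z + rng.gauss(0.0, 0.5)  # X follows its own mechanism
        else:
            x = do_steam  # the intervention cuts the Z -> X edge
        y = 0.5 * x + 1.0 * z + rng.gauss(0.0, 0.5)
        rows.append((x, y))
    return rows

def ols_slope(rows):
    """Least-squares slope of Y on X: the association a purely predictive model learns."""
    mx = fmean(x for x, _ in rows)
    my = fmean(y for _, y in rows)
    cov = sum((x - mx) * (y - my) for x, y in rows)
    var = sum((x - mx) ** 2 for x, _ in rows)
    return cov / var

def interventional_effect(n, rng):
    """E[Y | do(X=1)] - E[Y | do(X=0)], estimated by simulating both interventions."""
    high = fmean(y for _, y in sample(n, rng, do_steam=1.0))
    low = fmean(y for _, y in sample(n, rng, do_steam=0.0))
    return high - low

rng = random.Random(0)
# Analytically: Cov(X, Y) / Var(X) = 1.245 / 0.89, roughly 1.40
print(f"observational slope:   {ols_slope(sample(50_000, rng)):.2f}")
# Analytically: exactly the structural coefficient, 0.5
print(f"interventional effect: {interventional_effect(50_000, rng):.2f}")
```

Because load pushes steam pressure and instability up together, the predictive fit attributes roughly 1.4 units of instability to each unit of steam pressure, while the intervention reveals a true effect of 0.5. A controller acting on the predictive number would overestimate the effect of adjusting steam by nearly a factor of three.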

Why Now

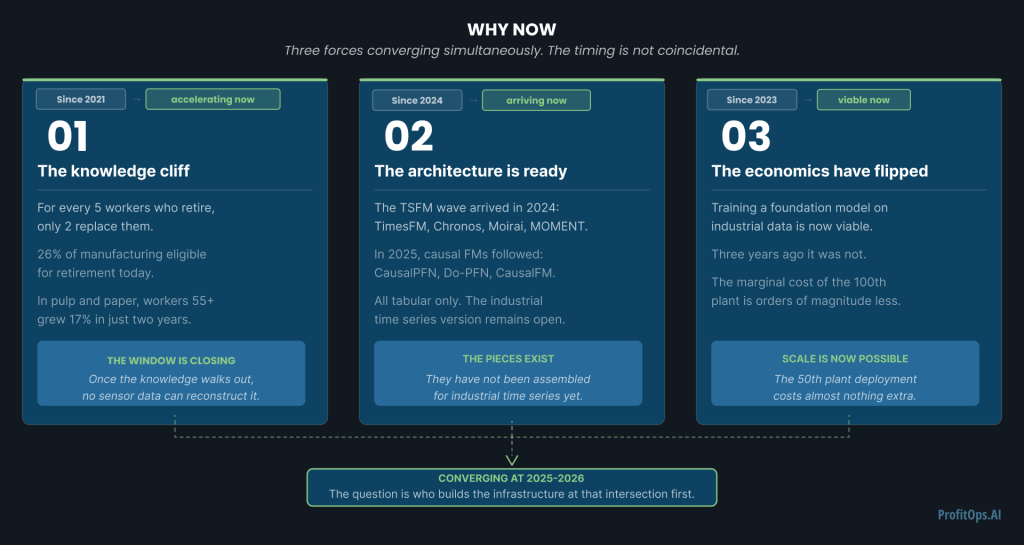

Three forces are converging simultaneously, and the timing is not coincidental.

The knowledge cliff: The workforce retirement wave isn’t a future problem; it’s happening now. The operators who understand why processes behave the way they do are leaving. Once that knowledge walks out the door, no amount of historical sensor data can reconstruct it. The window to capture, encode, and operationalize this expertise is closing.

The architecture is ready: Transformer-based models have proven they scale across language, vision, and tabular data. The prior-fitted network paradigm has shown that simulation-based pre-training enables zero-shot causal inference on tabular data. The pieces exist. They haven’t yet been assembled for industrial time series.

The compute economics have flipped: Training a foundation model on industrial sensor data from hundreds of plants is now economically viable in a way it wasn’t three years ago. The marginal cost of adding the 100th plant or warehouse to a pre-trained model is orders of magnitude smaller than training a bespoke model per plant.
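The simulation-based pre-training idea behind prior-fitted networks can be sketched briefly: repeatedly sample a random causal process, simulate data from it, and keep the ground-truth structure as the label, so that a network trained on many such pairs learns to infer structure from new data in a single forward pass. The generator below is a hypothetical, deliberately simplified prior over lagged linear causal models for multivariate time series. It is not the prior used by CausalPFN, Do-PFN, or CausalFM; the function name and parameters are illustrative, and the network training step is omitted.

```python
import random

# Hypothetical, simplified prior over lagged linear causal models.
# NOT the prior of any published causal PFN; for illustration only.

def sample_task(rng, n_vars=4, n_steps=200, max_lag=5, edge_prob=0.5):
    """Sample one synthetic causal process; return (series, true_edges).

    Variables are ordered 0..n_vars-1 and an edge may only run from a lower
    index to a higher one, so the causal graph is acyclic. Each edge gets a
    random lag (in time steps) and a random linear weight.
    """
    true_edges = {}  # (cause, effect) -> (lag, weight)
    for effect in range(1, n_vars):
        for cause in range(effect):
            if rng.random() < edge_prob:
                true_edges[(cause, effect)] = (rng.randint(1, max_lag), rng.uniform(-1.0, 1.0))

    series = [[0.0] * n_vars for _ in range(n_steps)]
    for t in range(n_steps):
        for j in range(n_vars):
            value = rng.gauss(0.0, 1.0)  # exogenous noise for variable j at time t
            for (cause, effect), (lag, weight) in true_edges.items():
                if effect == j and t >= lag:
                    value += weight * series[t - lag][cause]  # lagged causal influence
            series[t][j] = value
    return series, true_edges

rng = random.Random(42)
# A pre-training corpus: many (observed series, true causal graph) pairs.
corpus = [sample_task(rng) for _ in range(100)]
series, true_edges = corpus[0]
print(f"{len(corpus)} tasks; first task has {len(series)} steps and edges {true_edges}")
```

A practical prior would need to be far richer (nonlinear mechanisms, hidden confounders, regime changes). The essential property is that every synthetic task comes with its true causal graph, which is what makes supervised pre-training for causal inference possible.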

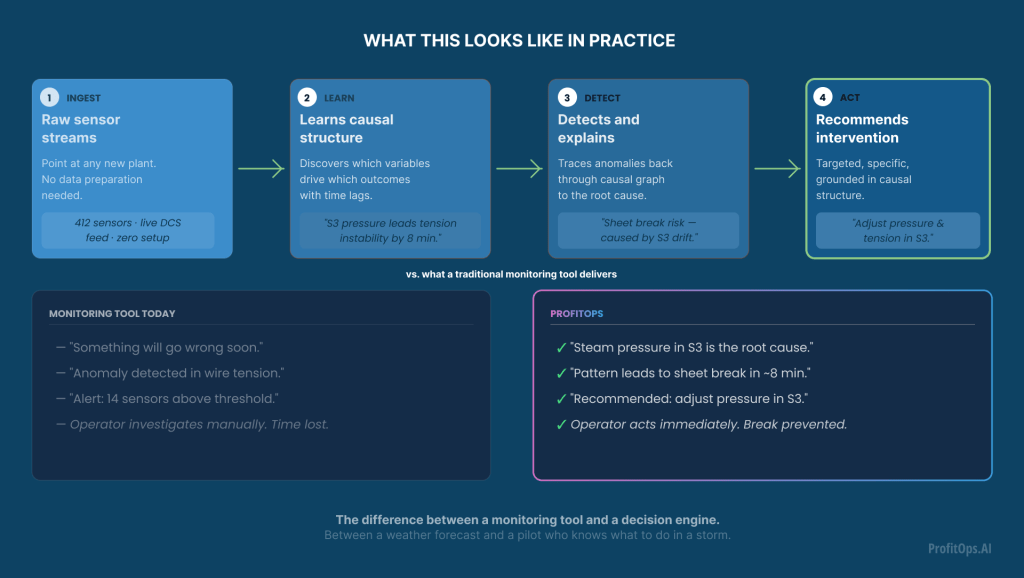

What This Looks Like in Practice

The vision isn’t another predictive maintenance dashboard. It’s something qualitatively different.

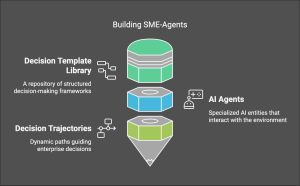

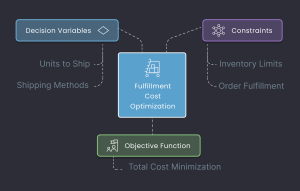

A Causal Time Series Foundation Model for industrial processes accepts raw sensor streams from a new plant and begins operating zero-shot, with no months of data preparation. It discovers the causal structure of that specific process: which variables drive which outcomes, with what time lags, under what conditions. When an anomaly is detected, it doesn’t just raise an alert. It explains: “steam pressure in section 3 is causing wire tension instability, which will lead to a sheet break in approximately 8 minutes.” Then it recommends a specific intervention: “reduce steam pressure by 4% and increase wire tension by 2% to stabilize.”

This is the difference between a monitoring tool and a decision engine. Between a weather forecast and a pilot who knows what to do in a storm.
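The “with what time lags” part of causal structure discovery can be illustrated with a deliberately simple stand-in. The Python sketch below simulates hypothetical data in which wire tension responds to steam pressure three samples later, then scans lagged correlations to recover that delay. Lagged correlation measures association, not causation; a real causal discovery method (for example, Granger-style tests or conditional-independence approaches such as PCMCI) would also condition on the other sensors. The signals, coefficients, and lag are illustrative assumptions.

```python
import random
from statistics import fmean

TRUE_LAG = 3  # hypothetical delay, in samples, from steam pressure to wire tension

def simulate(n, rng):
    """Hypothetical sensor data: tension[t] = 0.9 * steam[t - TRUE_LAG] + noise."""
    steam = [rng.gauss(0.0, 1.0) for _ in range(n)]
    tension = [
        (0.9 * steam[t - TRUE_LAG] if t >= TRUE_LAG else 0.0) + rng.gauss(0.0, 0.3)
        for t in range(n)
    ]
    return steam, tension

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = fmean(x), fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5

def best_lag(cause, effect, max_lag):
    """Score each lag L by |corr(cause[t], effect[t + L])| and return the best one."""
    scores = {}
    for lag in range(max_lag + 1):
        scores[lag] = abs(pearson(cause[: len(cause) - lag], effect[lag:]))
    return max(scores, key=scores.get), scores

rng = random.Random(7)
steam, tension = simulate(5_000, rng)
lag, scores = best_lag(steam, tension, max_lag=10)
print(f"steam -> tension: best lag {lag} (true lag {TRUE_LAG}), |corr| {scores[lag]:.2f}")
# Sanity check in the other direction: past tension should not track future steam.
_, reverse_scores = best_lag(tension, steam, max_lag=10)
print(f"tension -> steam: max |corr| {max(reverse_scores.values()):.2f}")
```

In this synthetic case the correct lag stands out clearly and the reverse direction shows no relationship. On real plant data, shared drivers such as machine speed or grade changes can produce lagged correlations with no causal link, which is why formal causal models, rather than correlation scans, are needed.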

The goal isn’t to replace the retiring expert. It’s to make sure their knowledge outlasts them, so the plant keeps running on it long after they’re gone.

That’s the thesis: not that AI replaces human expertise, but that, for the first time, we have the architecture to capture, encode, and continuously apply that expertise at foundation scale.

The workforce retirement crisis in manufacturing is real and accelerating. The foundation model revolution is real and accelerating. The gap between what industrial AI does today (predict) and what it needs to do (diagnose, intervene, control) is real and well documented in the academic literature.

These three forces are converging. The question is who builds the infrastructure at that intersection first.

If you’re working on causal AI for industrial time series, or if you’re a manufacturer grappling with exactly this knowledge-retention problem, I’d genuinely like to talk.

Conclusion

Manufacturing’s next crisis isn’t machine failure. It’s knowledge loss. Foundation models that predict and reason causally over industrial time series aren’t just an upgrade; they’re the infrastructure that keeps decades of operator expertise alive, scalable, and actionable long after the experts have left.

Ready to transform the way you solve business challenges?

ProfitOps doesn’t just flag what will fail. It finds the root cause, recommends the intervention, and gets smarter with every plant you add, so your operations outlast the experts who built them.

Shravan Talupula

Founder, ProfitOps